Submitted by Philip Storry on

I can't remember the last time I received a file from someone that I couldn't use.

These days, the file formats we use are pretty standardised. PDF files, Word documents, JPGs, MP3s, MP4s and so on - unless the person sending it to you is a specialist in some area, you have very good odds that any file you're sent will be readable.

Of course, it wasn't always so. But it was once much worse than just having different file formats. WordPerfect vs WordStar vs Word was just an argument between companies. Before that, it was a battle between rival computer hardware and rival countries.

Let's get down to basics - to a computer, everything is a number.

Everything.

Every letter you see on this page is a number to a computer. If you use your browser's "Find in page" feature, you think it's matching letters - but it's really matching numbers. Programmers all around the world have built entire libraries of computer code that allow them to believe that they are working with letters when they write software - but they are actually always using numbers.
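To make the "matching numbers" idea concrete, here is a minimal Python sketch of a naive find-in-page. It turns both the page and the search term into lists of Unicode code points with `ord()` and compares only those integers. The function `find_by_numbers` is hypothetical, and this is not how any particular browser implements its search.

```python
def find_by_numbers(page: str, term: str) -> int:
    """Return the index of the first match of term in page, or -1.

    Works purely on integer code points, never on "letters".
    """
    page_codes = [ord(ch) for ch in page]   # e.g. "A" -> 65
    term_codes = [ord(ch) for ch in term]
    n, m = len(page_codes), len(term_codes)
    for start in range(n - m + 1):
        # Compare a run of numbers against the search term's numbers
        if page_codes[start:start + m] == term_codes:
            return start
    return -1


page = "Every letter you see on this page is a number."
print([ord(ch) for ch in "page"])       # [112, 97, 103, 101]
print(find_by_numbers(page, "page"))    # same answer as page.find("page")
```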

So what defines which number is used for each letter?

These days, it's something called Unicode. But originally, people just made it up.

Yes, you read that correctly. They just made it up.

It didn't matter in the early days of computing, because you were very unlikely to exchange files with other computers. Most didn't have a network connection, and data input was via tapes or punched cards - hardly convenient.

But as computers began to be able to exchange data, knowing whether number 65 meant "a" or "A" became important. (I'm assuming your computer could manage lowercase and uppercase, of course!)
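In ASCII, 65 is "A" and 97 is "a". Python's `ord()` and `chr()` convert between characters and their numbers (Unicode keeps ASCII's numbering for its first 128 characters). The sketch below assumes Python 3 and also uses the standard `cp037` codec, one of IBM's EBCDIC mainframe code pages, to show that a different scheme gave the very same letter a completely different number.

```python
# Unicode keeps ASCII's numbering for the first 128 characters
print(ord("A"), ord("a"))        # 65 97
print(chr(65), chr(97))          # A a

# The same letter in ASCII versus an IBM EBCDIC mainframe code page
print("A".encode("ascii")[0])    # 65
print("A".encode("cp037")[0])    # 193 (0xC1), not 65
```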

In America, a standard called ASCII was proposed, and many computers used it - which should have brought some simplicity to everything. Except ASCII only defined the first 128 characters (7 bits). This made sense at the time, as some computers used 7-bit text.
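Seven bits give 2**7 = 128 possible values (0 to 127), and that is all ASCII defines. A quick Python check, assuming Python 3's built-in `ascii` codec, shows that plain English text fits, while a byte of 128 or above, or an accented letter, has no ASCII meaning at all.

```python
# 7 bits give 128 values (0-127); 8 bits give 256
print(2 ** 7, 2 ** 8)                   # 128 256

text = "Hello, World!"
data = text.encode("ascii")
print(max(data) < 128)                  # True: every byte fits in 7 bits

# A byte in the upper half (128-255) has no ASCII meaning
try:
    bytes([0xE9]).decode("ascii")
except UnicodeDecodeError as err:
    print("not ASCII:", err.reason)     # not ASCII: ordinal not in range(128)

# Nor can ASCII represent an accented letter
try:
    "café".encode("ascii")
except UnicodeEncodeError as err:
    print("not ASCII:", err.reason)     # not ASCII: ordinal not in range(128)
```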

However, very soon most computers used 8-bit bytes, which can hold 256 different values. So what was to be done with those extra 128 characters?

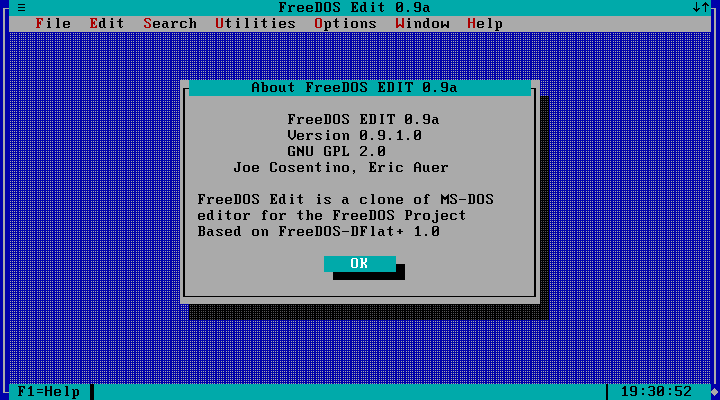

Well, it was a free-for-all. Every vendor used them whichever way they liked. In the US, they were used for drawing characters, so that you could create pretty boxes like this:

Note the lines around the edges of the box - all the characters used to draw them are in the upper part of the character space, and might be different from machine to machine.
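To see where those border characters live, this Python sketch draws a double-line box and encodes it with Python's standard `cp437` codec (code page 437). The `draw_box` helper is hypothetical. The label's letters stay below 128, but every border byte lands in the upper half (0x80 to 0xFF).

```python
def draw_box(label: str) -> str:
    """Hypothetical helper: surround a label with a double-line box."""
    width = len(label) + 2
    top = "╔" + "═" * width + "╗"
    middle = "║ " + label + " ║"
    bottom = "╚" + "═" * width + "╝"
    return "\n".join([top, middle, bottom])


box = draw_box("MENU")
raw = box.encode("cp437")

# Collect the distinct bytes that fall outside 7-bit ASCII
border_bytes = sorted({b for b in raw if b >= 0x80})
print(box)
print([hex(b) for b in border_bytes])
# ['0xba', '0xbb', '0xbc', '0xc8', '0xc9', '0xcd']
```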

But European countries needed accented characters, so they'd often ditch those box-drawing characters in favour of accented letters that the US/UK alphabet doesn't use.

This meant that, for a while, it was possible to send or receive a simple text file - in theory the most basic file possible - and have it display incorrectly for the recipient.

The "solution" was code pages: defined sets of characters for use with certain technologies or regions. The US had code page 437, Western Europe had code page 850, and Windows took code page 1252.
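To show how far apart these code pages could be, this Python sketch decodes one byte, 0xB5, under each of the three, using Python's standard `cp437`, `cp850` and `cp1252` codecs. The choice of byte is just an illustration.

```python
raw = bytes([0xB5])

for codepage in ("cp437", "cp850", "cp1252"):
    char = raw.decode(codepage)
    # Show the character and its Unicode code point
    print(f"{codepage:>6}: {char}  (U+{ord(char):04X})")

# Expected:
#  cp437: ╡  (U+2561)
#  cp850: Á  (U+00C1)
# cp1252: µ  (U+00B5)
```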

To read a file from elsewhere, users would have to change their code page using the commands MODE and CHCP - after making sure that they had the correct code page installed!
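Changing the code page never altered the file itself; it only changed how its bytes were turned back into characters. This Python sketch models that, assuming a Portuguese phrase was saved on a code page 850 machine and then viewed under code page 437, before and after "switching".

```python
# A phrase saved on a machine using code page 850 (Western Europe)
original = "São Paulo"
saved_bytes = original.encode("cp850")

# Viewed on a machine still set to code page 437 (US)
wrong = saved_bytes.decode("cp437")

# Viewed after switching to code page 850
right = saved_bytes.decode("cp850")

print(wrong)   # the "ã" comes out as a box-drawing character
print(right)   # São Paulo
print(wrong.encode("cp437") == saved_bytes)  # True: the bytes never changed
```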

Code pages actually originated on mainframes, but I encountered them on MS-DOS. In the 1980s, you were bound to eventually get a text file that wouldn't display correctly. And when you moved up to Windows, with its different code page, any old text files that used box drawing to outline headers would look very odd.
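That "very odd" look is easy to reproduce. This Python sketch takes the bytes of a code page 437 box, as an MS-DOS text file would have stored it, and decodes them as Windows code page 1252. The header text is made up for the example.

```python
# A header outlined with double-line box characters, stored as code page 437
header = "╔══════╗\n║ NEWS ║\n╚══════╝"
dos_bytes = header.encode("cp437")

# The same bytes opened by a program using Windows code page 1252
print(dos_bytes.decode("cp1252"))
# ÉÍÍÍÍÍÍ»
# º NEWS º
# ÈÍÍÍÍÍͼ
```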

Of course, time moves on and these days code pages aren't needed - modern computing platforms use Unicode throughout. But I can still remember how to change code pages - and I didn't need to look up the ones I quoted earlier in this article. Today they are just so many wasted neurons...
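With Unicode, every character gets a single number whichever machine you're on. The Python sketch below assumes UTF-8, a widely used Unicode encoding for files, and shows box-drawing and accented characters surviving a round trip with no code page setting at all.

```python
text = "╔═╗ São Paulo, café, ¢"

# Each character has exactly one Unicode code point
for ch in "╔ã":
    print(ch, f"U+{ord(ch):04X}")
# ╔ U+2554
# ã U+00E3

data = text.encode("utf-8")           # bytes suitable for a file
print(data.decode("utf-8") == text)   # True: no code page needed
```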